Design for the Realities of the Edge, Not the Lab


Edge AI fails in production when architectures assume reliable connectivity. Edge-First design decouples operations from the cloud so systems run when networks don't.


by


Steve Yates, P.E. (VA), CEO and Co-Founder, Federant


4 minutes


Industry


If you want to succeed with Edge AI, you need Edge-First architecture.

As we’ll discuss at my upcoming AIoT World Expo session, many platforms and frameworks for the ‘edge’ assume 100% reliable network access that simply doesn't exist on factory floors, in agricultural operations, or at remote industrial sites.

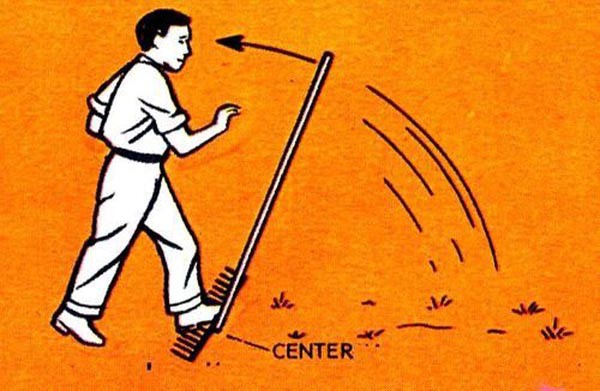

It’s like we, as an industry, keep stepping on the same rake.

At first, we tried to solve security with tamper-proof hardware before moving to zero-trust architectures. We relied on perimeter security until distributed systems made perimeters irrelevant. We treated RAID as data protection until recovery and backup became primary design concerns. In each case, the mistake was the same: hardening one layer instead of fixing the real architectural issue.

Edge AI’s dependence on continuous cloud connectivity is no different. The solution isn't better networks or more bandwidth. It’s decoupling correctness, trust, control, and decision-making from something that is not always available and that we don't control.

This isn’t a rejection of the cloud. Cloud capabilities are essential, but they must be additive, not gating. That’s what Edge-First design really means.

So let’s take a deeper look into the key architectural considerations.

Uplink Constraints Will Break Cloud-Dependent Architectures Before Scale

Today's networks were built for a downlink-heavy world: delivering content to humans consuming videos and downloading files. They weren't built to provide uplink-heavy connectivity for thousands of AIoT devices streaming sensor telemetry, images, and video upstream to cloud data centers for inference.

Gartner predicted that by 2025, 75 percent of enterprise-generated data would be created and processed at the edge, outside traditional centralized data centers and clouds. Yet the infrastructure serving edge locations was designed for lightweight telemetry and occasional synchronization, not continuous inference workloads.

The math just doesn't work.
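To make that arithmetic concrete, here is a rough back-of-the-envelope sketch. Every number in it is an illustrative assumption (device count, stream bitrate, uplink capacity, result size), not a figure from a real deployment; swap in your own site's values.

```python
# All figures below are illustrative assumptions, not measurements.
CAMERAS = 200          # hypothetical number of devices at one site
STREAM_MBPS = 2.0      # assumed bitrate of one compressed video stream
UPLINK_MBPS = 20.0     # assumed usable uplink shared by the whole site
EVENT_BYTES = 512      # assumed size of one local inference result
EVENTS_PER_MIN = 6     # assumed number of results per device per minute


def streaming_demand_mbps(devices: int, per_stream_mbps: float) -> float:
    """Uplink needed if every device streams raw video to the cloud."""
    return devices * per_stream_mbps


def edge_demand_mbps(devices: int, event_bytes: int, events_per_min: int) -> float:
    """Uplink needed if devices infer locally and send only the results."""
    bits_per_second = devices * event_bytes * 8 * events_per_min / 60
    return bits_per_second / 1_000_000


cloud = streaming_demand_mbps(CAMERAS, STREAM_MBPS)
edge = edge_demand_mbps(CAMERAS, EVENT_BYTES, EVENTS_PER_MIN)

print(f"cloud inference: {cloud:.0f} Mbps ({cloud / UPLINK_MBPS:.0f}x the uplink)")
print(f"edge inference:  {edge:.3f} Mbps ({edge / UPLINK_MBPS:.2%} of the uplink)")
```

Under these assumptions, streaming everything upstream oversubscribes the link many times over, while shipping only inference results uses a small fraction of it.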

However, ubiquitous satellite connectivity has genuinely changed what's possible at the remote edge. And it has also exposed a new challenge. It’s no longer a question of raw connectivity. It's now about the capability to deploy, secure, and operate AIoT solutions where power is unreliable, environments are harsh, and the nearest support team is hundreds of miles away.

Non-terrestrial networks don’t solve the problem. They just make the systems problem more obvious.

Frameworks Solve the Model Problem but Not the Architecture Problem

TensorFlow Lite, PyTorch Mobile, and ONNX Runtime are genuinely useful tools for model optimization, quantization, and efficient inference on resource-constrained hardware. But model efficiency alone can't address the operational reality of deploying AI where it actually needs to run.

The models work. Sure.

The use cases deliver value in demonstrations. Absolutely.

But what fails is the assumption that cloud-first architectures can extend to environments where connectivity is intermittent and latency requirements can't tolerate round trips to distant data centers.

Full-stack AI development is a huge problem, and you won't solve it alone. It takes best-of-breed solutions and open developer communities. Selecting the right inference framework is maybe ten percent of the deployment challenge.

The other ninety percent is, well, everything else: how the system recovers when updates fail; how it operates when connectivity drops; how it manages power when running off solar; how it gets maintained when the nearest trained technician is a four-hour drive away.

Power and Recovery Define Edge Success More Than Compute Performance

Conference sessions about edge AI focus on TOPS, inference benchmarks, and model accuracy. These metrics matter, but they're not what determines whether a deployment succeeds in production.

Remember: in the remote desert, you're not running in a gigawatt-class data center. You're running off solar or battery, and every watt counts. The systems have to survive extremes of vibration, weather, and dust. And nobody's coming to reboot when something goes wrong.

Secure remote recovery, self-healing, and zero-touch provisioning become critical capabilities rather than nice-to-have features. A single truck roll to a remote location can cost thousands of dollars in technician time, travel, and lost productivity. Multiply that across thousands of devices receiving updates simultaneously, and the cost of a failed deployment becomes prohibitive.

That’s why hardware-rooted recovery matters: it is what makes aggressive update schedules feasible, and it is necessary to avoid costly physical intervention.
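One common way to get this kind of recovery is an A/B slot update with automatic rollback. The sketch below is a toy software simulation of that pattern, offered as an assumption about how such a scheme could look rather than a description of any particular product. `Device`, `apply_update`, and the `health_check` callback are hypothetical names, and in a real system the boot counter and slot switch would live in the bootloader or hardware watchdog, not in application code.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Device:
    """Toy model of a device with two firmware slots (A and B)."""
    slots: dict = field(default_factory=lambda: {"A": "v1", "B": None})
    active: str = "A"
    boot_attempts_left: int = 0  # stands in for a hardware watchdog counter

    def inactive(self) -> str:
        return "B" if self.active == "A" else "A"


def apply_update(dev: Device, version: str,
                 health_check: Callable[[str], bool],
                 max_attempts: int = 3) -> bool:
    """Write to the inactive slot, trial-boot it, roll back if it never passes."""
    previous = dev.active
    target = dev.inactive()
    dev.slots[target] = version   # the known-good slot is never overwritten
    dev.active = target           # tentative switch to the new slot
    dev.boot_attempts_left = max_attempts
    while dev.boot_attempts_left > 0:
        dev.boot_attempts_left -= 1
        if health_check(version):
            return True           # commit: the new slot stays active
    dev.active = previous         # rollback without anyone on site
    return False


good = Device()
good_ok = apply_update(good, "v2", lambda v: True)
print(good_ok, good.active, good.slots[good.active])

bad = Device()
bad_ok = apply_update(bad, "v2-broken", lambda v: False)
print(bad_ok, bad.active, bad.slots[bad.active])
```

The design choice that matters is that the update never touches the slot the device is currently running from, so a failed update costs a few reboot attempts instead of a truck roll.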

Cloud-Optional Architecture Converts Pilot Demonstrations into Production Systems

According to surveys from S&P Global and IDC, most enterprise AI pilots never reach production, largely because of scalability challenges. Breaking the gridlock requires infrastructure rebuilt around a different premise: Edge-First design where cloud connectivity is optional.

This isn't about rejecting the cloud. Cloud infrastructure provides genuine value for model training, fleet-wide analytics, and centralized management. But the control plane, data plane, and decision plane can't all depend on continuous cloud availability. Predictive maintenance systems monitoring rotating equipment have to detect anomalies in real time regardless of satellite availability. Safety monitoring in manufacturing can't wait for cloud round trips.

The architecture I'm describing inverts the traditional cloud model. Instead of streaming sensor data to centralized infrastructure, edge nodes run inference locally and store results for later synchronization. When connectivity is available, the system uploads telemetry, downloads model updates, and reconciles state. When connectivity fails, operations continue uninterrupted.
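The loop described above can be sketched as a store-and-forward outbox. This is a minimal illustration under stated assumptions, not a reference implementation: `EdgeNode`, `infer`, and `uplink` are hypothetical names, the "model" is a simple threshold, and SQLite stands in for whatever durable local store a real node would use.

```python
import json
import sqlite3


class EdgeNode:
    """Runs inference locally and queues results until the uplink returns."""

    def __init__(self, infer, uplink, db_path=":memory:"):
        self.infer = infer      # local model call (hypothetical)
        self.uplink = uplink    # raises ConnectionError when offline
        self.db = sqlite3.connect(db_path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS outbox ("
            "id INTEGER PRIMARY KEY AUTOINCREMENT, payload TEXT NOT NULL)"
        )

    def handle(self, reading):
        """Decide locally first; the cloud is never on this path."""
        result = self.infer(reading)
        self.db.execute(
            "INSERT INTO outbox (payload) VALUES (?)", (json.dumps(result),)
        )
        self.db.commit()
        return result

    def sync(self):
        """Drain the outbox oldest-first; stop at the first failed send."""
        sent = 0
        rows = self.db.execute(
            "SELECT id, payload FROM outbox ORDER BY id"
        ).fetchall()
        for row_id, payload in rows:
            try:
                self.uplink(json.loads(payload))
            except ConnectionError:
                break           # keep the rest for the next window
            self.db.execute("DELETE FROM outbox WHERE id = ?", (row_id,))
            self.db.commit()
            sent += 1
        return sent

    def pending(self):
        return self.db.execute("SELECT COUNT(*) FROM outbox").fetchone()[0]


link = {"up": False}   # simulated connectivity state
received = []          # what the "cloud" has seen so far


def uplink(record):
    if not link["up"]:
        raise ConnectionError("no uplink")
    received.append(record)


def infer(reading):
    # Placeholder model: flag readings above an assumed threshold.
    return {"reading": reading, "anomaly": reading > 0.8}


node = EdgeNode(infer, uplink)
offline_results = [node.handle(r) for r in (0.2, 0.95, 0.5)]
sent_offline = node.sync()   # link is down, nothing leaves the node
link["up"] = True
sent_online = node.sync()    # link is back, the backlog drains in order
print(sent_offline, sent_online, node.pending())
```

Note that `handle` returns the local decision whether or not the link is up. Synchronization is a separate, best-effort step, which is the sense in which the cloud is additive rather than gating.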

That’s what Edge-First design really means.

What I've observed over three decades is that the most underserved locations are the most in need of digital transformation. They feed, power, transport, supply, and protect all of us. Edge AI isn't waiting for 6G or standards committees to catch up. It's being built by those closest to the problem, extending intelligence and resilience to the furthest edges of the real world.

Steve Yates is CEO and Co-Founder of Federant, an open edge-first hardware and software platform for deploying and managing intelligent applications in harsh environments. Learn more at federant.com.


© 2026 Federant
