Mar 22, 2026

Deloitte's Physical AI Report Documents the Most Important Regression in Industrial AI

Their maturity model starts with governance solved and ends with governance as an open question. I don't think they noticed.

by

Steve Yates, P.E.

8 minutes

Industry

Deloitte released Physical AI: The moment of acceleration on March 18, 2026. It is one of the strongest analyses of physical AI I've read this year. Their data is compelling, their technology framework is thorough, and their conclusion, that governance is the critical emerging dimension of physical AI, is exactly right.

But the report documents a critical issue it never addresses. Its own maturity model, presented as a progression from basic automation to full autonomous physical AI, contains an architectural regression in the most safety-critical dimension of the entire stack: governance.

And the entire framework rests on a dependency it never questions: connectivity.

Stage 1: Governance solved for deterministic control

Deloitte's maturity model maps physical AI adoption across four stages. Stage 1 is automation. Its foundational technologies are programmable logic controllers (PLCs), sensors and actuators, safety systems, and dedicated control logic. At this stage, governance is inherently local. Every safety interlock executes at the point of action. Every operational boundary resides in the same hardware that controls the process. Authority never leaves the cabinet. The system works identically whether the supervisory network is up or not.

To be clear about what "solved" means here: PLCs govern deterministic processes within pre-programmed parameters. They don't handle novel conditions, they don't learn, and they don't adapt. A PLC that encounters a situation outside its programmed logic doesn't improvise. It fails to a predetermined safe state. That's a limited form of governance. But within its scope, it is complete, reliable, and local. The governance problem PLCs were designed to solve, enforcing operational boundaries at the point of action without external dependencies, was solved. Completely.

I would know. I designed these systems.

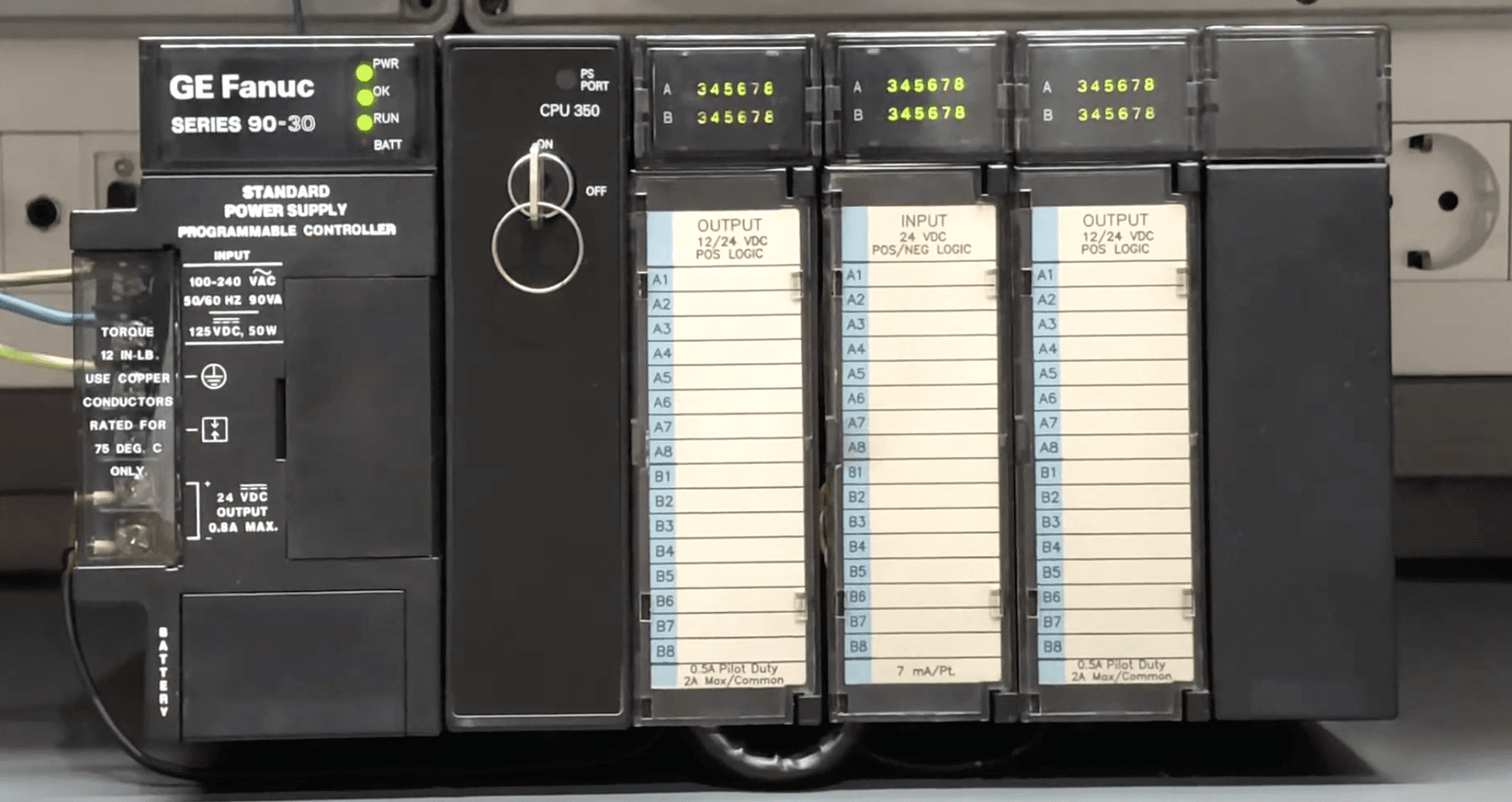

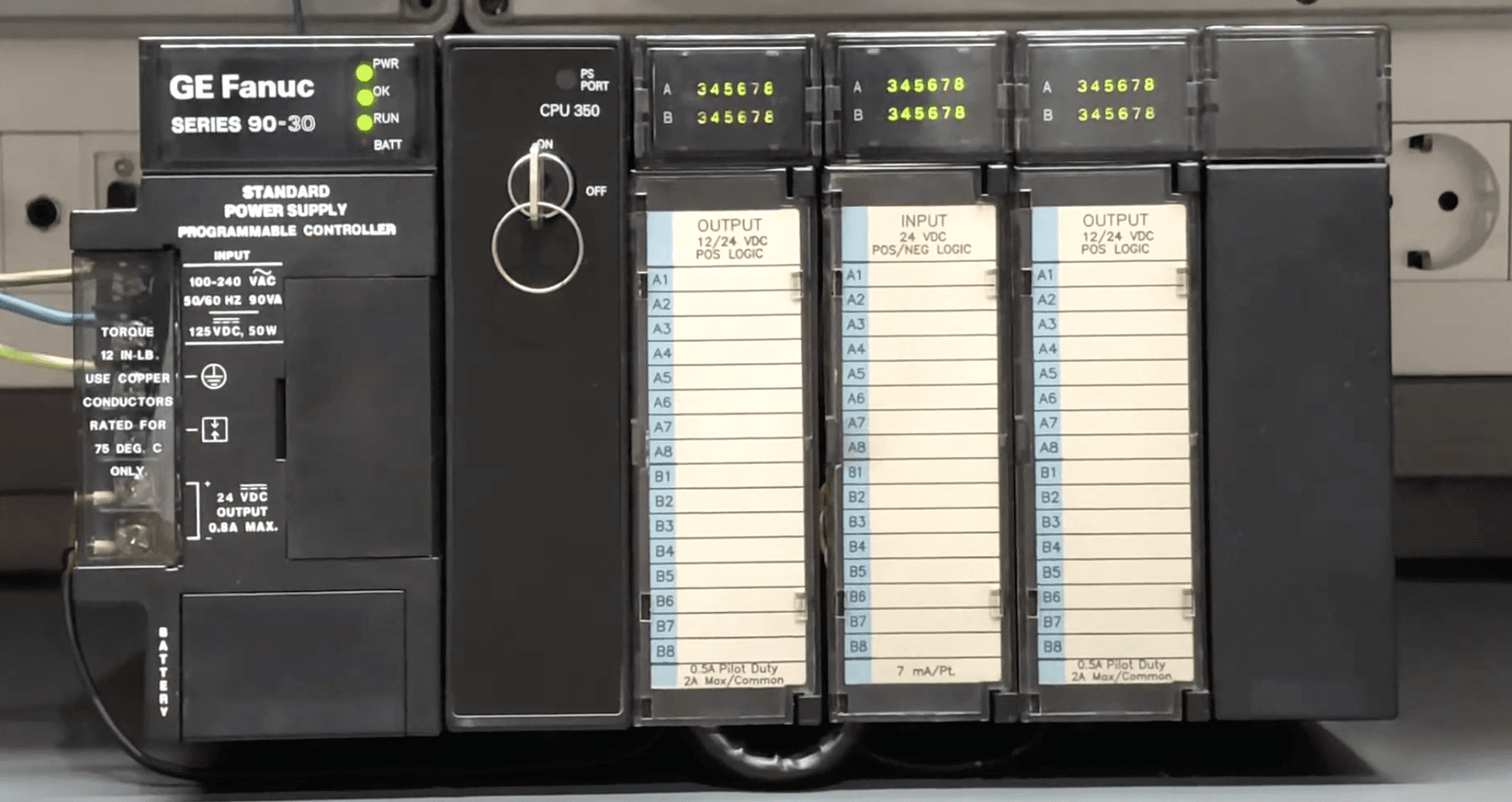

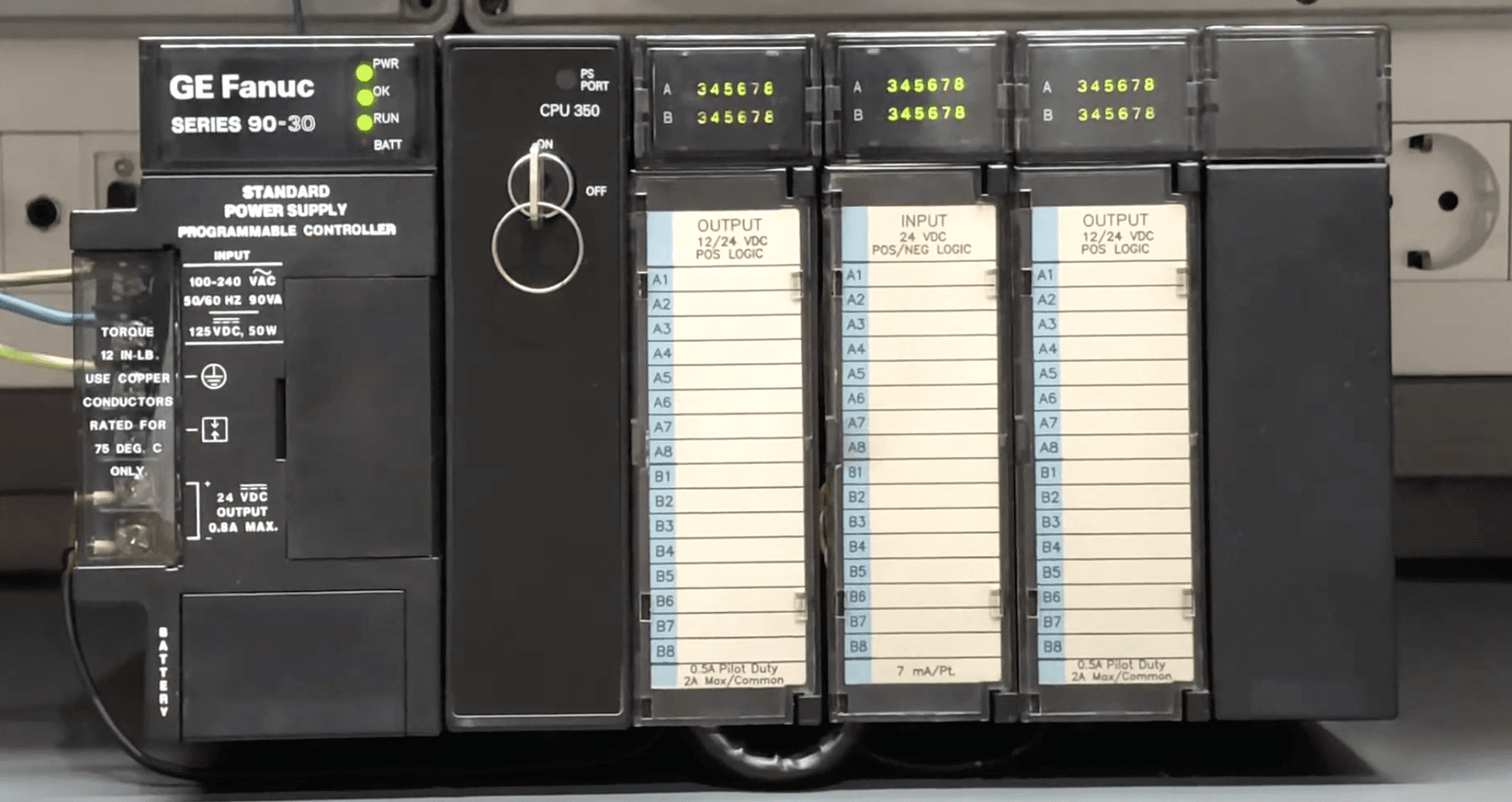

Thirty-two years ago at GE Fanuc Automation, I engineered CPU and I/O modules for PLCs including the Series 90-30. The non-negotiable bedrock principle: governance lives with the process. If the supervisory layer loses communication, the PLC keeps running within its governed parameters. If a safety interlock trips, it trips locally. No round trip to a server. No dependency on a network link. No cloud service that might be experiencing latency or an outage. Not 99.9% or 99.999%. Governance availability was 100% by design, because the governance and the governed were never separated by a network.

This wasn't a design limitation. It was the design principle. And it worked for decades, across every industrial environment imaginable: refineries, power plants, water treatment facilities, manufacturing lines, mining operations. PLCs governed safely in environments that would challenge most modern edge computing hardware. That's not a legacy constraint. That is hard-won engineering wisdom that has kept industrial automation safe for over half a century.

That is Stage 1 in Deloitte's maturity model.

Stages 2 and 3: Authority migrates quietly

Stage 2 introduces collaborative digitalization: networked sensors, IIoT gateways, edge computing nodes. Data begins flowing upward from the shop floor to supervisory layers. Stage 3 adds digital twins and cloud integration. Operations synchronize bi-directionally with virtual replicas. The platform layer gains increasing operational influence with each stage.

But here is what matters: the PLC governance layer remains intact underneath. The cloud and platform layers at Stages 2 and 3 add data visibility, digital twins, and supervisory optimization on top of a local governance foundation that continues to function independently. When the supervisory link fails, the PLC catches it. The local safety interlocks still fire. The operational boundaries still hold. The system degrades gracefully because the original governance architecture is still in place as a safety net.

This explains why the migration of authority toward the cloud has been invisible so far. Nothing has gone wrong at Stages 2 and 3. Not because cloud-dependent governance is safe, but because local governance has been quietly covering for it.

Stage 4: A new class of ungoverned decisions

By Stage 4, Deloitte envisions full physical AI: autonomous decision-making with no human involvement, fleet orchestration platforms, vision-language-action models trained in simulation and deployed at the edge. This is the frontier.

And governance? At Stage 4, Deloitte calls it an "emerging dimension." A new class of risks involving physical safety, system autonomy, accountability, and operational control, "often arising at machine speed and beyond direct human intervention." They acknowledge that the current approach is restrictive, focused on defining where AI should not be used, with the harder question of how to safely grant autonomy still ahead.

Governance was solved for deterministic control at Stage 1 and reverts to unsolved at Stage 4. Physical AI regresses industrial governance from solved to unsolved, and calls that progress.

The regression is not that PLCs disappear at Stage 4. They may well still be there, handling basic process safety interlocks underneath. At Stages 2 and 3, losing connectivity means losing optimization but keeping safety. That's graceful degradation and it has worked well. The concern at Stage 4 is different: autonomous AI decisions increasingly have direct physical consequences beyond optimization and beyond what PLC interlocks were designed to catch.

The regression is that Stage 4 introduces an entire class of autonomous decisions that PLCs were never designed to govern. A PLC can enforce "shut down the pump if pressure exceeds 150 PSI." A PLC cannot govern an AI vision system that reclassifies an obstacle and chooses an alternate route. It cannot govern a fleet orchestration model that reassigns task priorities across autonomous vehicles. These are non-deterministic decisions made by AI models operating above the PLC layer, and the governance of those decisions (the policies, the boundaries, the attestation, the explainability) lives in the platform layer. On the other side of a network link.
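To make the contrast concrete, here is roughly what the pump rule looks like when written out. This is a Python illustration, not PLC ladder logic or any vendor's code; the class name, the latching behavior, and the reset method are assumptions added for the sketch. What it shows is that the rule is a function of a local measurement and nothing else, with no lookup over a network.

```python
from dataclasses import dataclass

PRESSURE_LIMIT_PSI = 150.0  # the limit quoted in the pump example


@dataclass
class PumpInterlock:
    """Hypothetical latching interlock: once tripped, stays tripped until reset."""
    tripped: bool = False

    def scan(self, pressure_psi: float) -> bool:
        """Run one scan cycle; return True if the pump may run."""
        if pressure_psi > PRESSURE_LIMIT_PSI:
            self.tripped = True  # trips locally, no round trip to a server
        return not self.tripped

    def reset(self) -> None:
        """Manual reset, assumed to be performed by an operator on site."""
        self.tripped = False


interlock = PumpInterlock()
for reading in (120.0, 149.9, 151.2, 130.0):
    print(reading, "run" if interlock.scan(reading) else "shut down")
```

A decision such as rerouting around a reclassified obstacle has no single threshold to compare against, which is why it falls outside this kind of logic.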

The PLC safety net still catches catastrophic process failures. But it cannot govern AI operational behavior. The gap between what local safety systems can enforce and what autonomous AI actually decides is where governance vanishes under partition. And that gap grows with every Stage 4 capability added to the stack.

There is a critical distinction here that the industry frequently conflates: running the AI model at the edge is not the same as governing it there. Many modern physical AI architectures execute inference locally. NVIDIA's Jetson platform, for example, runs models on the device. But a system can execute inference at the edge while its policy enforcement, decision attestation, and operational boundaries still depend on a cloud platform. Edge inference solves latency and data sovereignty. By itself, it does not solve governance under partition. If the policies, boundaries, and attestation depend on connectivity, the governance is cloud-dependent regardless of where the model runs.
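The distinction can be sketched in a few lines. Both gates below sit in front of a model that runs on the device; they differ only in where the policy check executes. Everything here is hypothetical: the `Policy` fields, the speed limit, and the `cloud_reachable` flag stand in for whatever a real platform would use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    """Hypothetical operational boundary for an autonomous vehicle."""
    max_speed_mps: float


def cloud_gate(proposed_speed: float, policy: Policy, cloud_reachable: bool) -> bool:
    """Cloud-dependent governance: no link means no policy decision at all."""
    if not cloud_reachable:
        raise ConnectionError("policy service unreachable; action is ungoverned")
    return proposed_speed <= policy.max_speed_mps


def local_gate(proposed_speed: float, policy: Policy, cloud_reachable: bool) -> bool:
    """Local governance: the on-device policy copy decides, link or no link."""
    # cloud_reachable is deliberately ignored; the check never leaves the device.
    return proposed_speed <= policy.max_speed_mps


policy = Policy(max_speed_mps=2.0)
print(local_gate(1.5, policy, cloud_reachable=False))
print(local_gate(3.0, policy, cloud_reachable=False))
```

In both cases inference could be running on the same edge hardware. Only the second gate still returns an answer under partition.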

The question they didn't ask

In the bottlenecks section, Deloitte asks whether legacy control systems, including PLCs, SCADA, and MES, can integrate with AI orchestration platforms. They frame this as a readiness question: can the legacy infrastructure plug into the new architecture?

But the question that actually matters is the opposite: does the new architecture carry forward the governance principle that made the old systems safe? Not for basic process safety, which PLCs may still handle, but for the new autonomous decisions that only exist at Stage 4.

It does not. And the report leaves this entirely unexplored.

Deloitte states directly that without IIoT connectivity, physical AI cannot operate at scale. Connectivity isn't mentioned as a variable or a risk factor. It is stated as a prerequisite.

Now put a Stage 4 physical AI system into the environments where it creates the most value. On an offshore platform where satellite connectivity is intermittent. In an underground mine where communication infrastructure degrades as operations move deeper. On an agricultural site where the nearest IT support is hours away and where the carrier's SLA allows nearly 9 hours of downtime per year (based on Starlink's published business-class SLA of 99.9% uptime, which excludes outages under 60 seconds, weather events, and physical obstructions). In a disaster response zone where the communications network is the first infrastructure to fail and the last to be restored.
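The "nearly 9 hours" figure follows directly from the uptime percentage. Here is a quick check of the arithmetic, assuming a 365-day year. The SLA exclusions mentioned above are ignored, so this is the contractual allowance only.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, assuming a non-leap year


def allowed_downtime_hours(uptime_percent: float) -> float:
    """Hours per year a link may be down while still meeting the stated uptime."""
    return HOURS_PER_YEAR * (1 - uptime_percent / 100)


for uptime in (99.9, 99.999):
    print(f"{uptime}% uptime -> {allowed_downtime_hours(uptime):.2f} h/year")
```

At 99.9% this comes to about 8.76 hours per year. Even at 99.999% it is roughly five minutes, which is still not zero.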

When the link goes down, what governs the autonomous AI decisions above the PLC layer? Where do the policies execute? Where do the decision attestation logs go? How does the system reconcile its autonomous actions with fleet-level policy when connectivity restores?

The report doesn't ask because it treats connectivity as a prerequisite rather than a variable. That single assumption is the foundation the entire framework is built on. And it is precisely the assumption that breaks in the environments where physical AI matters most.

The numbers should alarm us

Deloitte's companion State of AI in the Enterprise 2026 survey provides the scale. Fifty-eight percent of companies are already using physical AI. Adoption is projected to reach 80% within two years. Integration is forecast to grow six-fold.

But only 21% of companies report having a mature governance model for autonomous AI agents. Four out of five organizations are scaling physical AI with governance that is either immature or absent.

Most deployments today are still at Stages 1 through 3, where the PLC safety net holds. This is a temporary state. The architectural regression described here has not yet manifested at scale, but the trajectory is clear, and governance architectures take years to design, test, and deploy. The time to solve Stage 4 governance is before Stage 4 scales, not after. Retrofitting governance after deployment is how incidents happen.

Every deployment that reaches Stage 4 without local governance for autonomous AI decisions is accumulating a liability the industry hasn't named yet. More on that soon.

The principle that should carry forward

Deloitte's report is excellent. Their identification of governance as the emerging dimension of physical AI is an important contribution the industry needs to hear.

But the governance lesson of Stage 1 should be the design requirement for Stage 4, not an open question. The engineers who designed PLCs understood something the physical AI industry is now unlearning: authority must reside where consequences occur. That principle wasn't a limitation of older technology. It was the insight that made industrial automation safe enough to trust with physical processes that can injure people and damage equipment.

Physical AI raises the stakes. Non-deterministic AI models can behave unpredictably in ways deterministic PLCs never could. That makes local governance authority more important at Stage 4 than it was at Stage 1, not less. The answer is not to slow physical AI adoption. The answer is to design governance architectures that extend the PLC principle into Stage 4: governance that executes locally at the point of action, operates fully under network partition, and reconciles with fleet-level policy when connectivity restores.
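As one way to picture those three requirements, the sketch below records each locally governed decision in a hash-chained log while the link is down and hands over the unsent entries once it returns. It is an illustration under stated assumptions, not a description of any existing platform: the class, its methods, and the record fields are all made up for the example.

```python
import hashlib
import json
from typing import Callable


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of one log entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AttestationLog:
    """Hypothetical append-only decision log kept on the device."""

    GENESIS = "0" * 64  # placeholder hash preceding the first entry

    def __init__(self) -> None:
        self.entries: list[dict] = []
        self.synced = 0  # number of entries already delivered upstream

    def record(self, decision: str, allowed: bool) -> dict:
        """Append a decision, chaining it to the previous entry's hash."""
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {"decision": decision, "allowed": allowed, "prev": prev}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain to detect entries altered after the fact."""
        prev = self.GENESIS
        for e in self.entries:
            body = {"decision": e["decision"], "allowed": e["allowed"], "prev": prev}
            if e["prev"] != prev or e["hash"] != _digest(body):
                return False
            prev = e["hash"]
        return True

    def reconcile(self, upload: Callable[[list[dict]], None]) -> int:
        """On reconnect, send everything recorded since the last sync."""
        pending = self.entries[self.synced:]
        upload(pending)
        self.synced = len(self.entries)
        return len(pending)


log = AttestationLog()
log.record("reroute around obstacle", allowed=True)
log.record("exceed speed limit", allowed=False)
received: list[dict] = []  # stands in for the fleet-level service
print(log.reconcile(received.extend), log.verify())
```

The log is written whether or not the link is up. Connectivity only determines when the fleet level sees it, not whether the decision was governed and recorded.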

Not governance that replaces PLCs. Governance that covers the gap above them. Not edge inference, which solves where the model runs. Edge governance, which solves where authority lives when the link goes down.

That is what we are building at Federant. The PLC principle carried forward into the age of physical AI. Governance at the point of action. Connected or not.

There is no precedent in industrial operations for accepting off-site governance as the sole authority over safety-critical autonomous systems, and no evidence that the engineers responsible for these environments will start now. Physical AI doesn't change that requirement. It raises the stakes.

Steve Yates, P.E. (VA) is CEO and Co-Founder of Federant, which is developing open governance infrastructure for autonomous edge AI. He previously founded ADI Engineering, a global OEM edge networking supplier, and led its acquisition by Silicom Ltd. He holds 9 US patents in edge networking and cybersecurity with 11 additional patents pending in AI governance infrastructure.

Deloitte released Physical AI: The moment of acceleration on March 18, 2026. It is one of the strongest analyses of physical AI I've read this year. Their data is compelling, their technology framework is thorough, and their conclusion, that governance is the critical emerging dimension of physical AI, is exactly right.

But the report documents a critical issue it never addresses. Its own maturity model, presented as a progression from basic automation to full autonomous physical AI, contains an architectural regression in the most safety-critical dimension of the entire stack: governance.

And the entire framework rests on a dependency it never questions: connectivity.

Stage 1: Governance solved for deterministic control

Deloitte's maturity model maps physical AI adoption across four stages. Stage 1 is automation. The foundational technologies: Programmable Logic Controllers, sensors and actuators, safety systems, and dedicated control logic. At this stage, governance is inherently local. Every safety interlock executes at the point of action. Every operational boundary resides in the same hardware that controls the process. Authority never leaves the cabinet. The system works identically whether the supervisory network is up or not.

To be clear about what "solved" means here: PLCs govern deterministic processes within pre-programmed parameters. They don't handle novel conditions, they don't learn, and they don't adapt. A PLC that encounters a situation outside its programmed logic doesn't improvise. It fails to a predetermined safe state. That's a limited form of governance. But within its scope, it is complete, reliable, and local. The governance problem PLCs were designed to solve, enforcing operational boundaries at the point of action without external dependencies, was solved. Completely.

I would know. I designed these systems.

Thirty-two years ago at GE Fanuc Automation, I engineered CPU and I/O modules for PLCs including the Series 90-30. The non-negotiable bedrock principle: governance lives with the process. If the supervisory layer loses communication, the PLC keeps running within its governed parameters. If a safety interlock trips, it trips locally. No round trip to a server. No dependency on a network link. No cloud service that might be experiencing latency or an outage. Not 99.9% or 99.999%. Governance availability was 100% by design, because the governance and the governed were never separated by a network.

This wasn't a design limitation. It was the design principle. And it worked for decades, across every industrial environment imaginable: refineries, power plants, water treatment facilities, manufacturing lines, mining operations. PLCs governed safely in environments that would challenge most modern edge computing hardware. That's not a legacy constraint. That is hard-won engineering wisdom that has kept industrial automation safe for over half a century.

That is Stage 1 in Deloitte's maturity model.

Stages 2 and 3: Authority migrates quietly

Stage 2 introduces collaborative digitalization: networked sensors, IIoT gateways, edge computing nodes. Data begins flowing upward from the shop floor to supervisory layers. Stage 3 adds digital twins and cloud integration. Operations synchronize bi-directionally with virtual replicas. The platform layer gains increasing operational influence with each stage.

But here is what matters: the PLC governance layer remains intact underneath. The cloud and platform layers at Stages 2 and 3 add data visibility, digital twins, and supervisory optimization on top of a local governance foundation that continues to function independently. When the supervisory link fails, the PLC catches it. The local safety interlocks still fire. The operational boundaries still hold. The system degrades gracefully because the original governance architecture is still in place as a safety net.

This explains why the migration of authority toward the cloud has been invisible so far. Nothing has gone wrong at Stages 2 and 3. Not because cloud-dependent governance is safe, but because local governance has been quietly covering for it.

Stage 4: A new class of ungoverned decisions

By Stage 4, Deloitte envisions full physical AI: autonomous decision-making with no human involvement, fleet orchestration platforms, vision-language-action models trained in simulation and deployed at the edge. This is the frontier.

And governance? At Stage 4, Deloitte calls it an "emerging dimension." A new class of risks involving physical safety, system autonomy, accountability, and operational control, "often arising at machine speed and beyond direct human intervention." They acknowledge that the current approach is restrictive, focused on defining where AI should not be used, with the harder question of how to safely grant autonomy still ahead.

Governance was solved for deterministic control at Stage 1 and reverts to unsolved at Stage 4. Physical AI regresses industrial governance from solved to unsolved, and calls that progress.

The regression is not that PLCs disappear at Stage 4. They may well still be there, handling basic process safety interlocks underneath. At Stages 2 and 3, losing connectivity means losing optimization but keeping safety. That's graceful degradation and it has worked well. The concern at Stage 4 is different: autonomous AI decisions increasingly have direct physical consequences beyond optimization and beyond what PLC interlocks were designed to catch.

The regression is that Stage 4 introduces an entire class of autonomous decisions that PLCs were never designed to govern. A PLC can enforce "shut down the pump if pressure exceeds 150 PSI." A PLC cannot govern an AI vision system that reclassifies an obstacle and chooses an alternate route. It cannot govern a fleet orchestration model that reassigns task priorities across autonomous vehicles. These are non-deterministic decisions made by AI models operating above the PLC layer, and the governance of those decisions (the policies, the boundaries, the attestation, the explainability) lives in the platform layer. On the other side of a network link.

The PLC safety net still catches catastrophic process failures. But it cannot govern AI operational behavior. The gap between what local safety systems can enforce and what autonomous AI actually decides is where governance vanishes under partition. And that gap grows with every Stage 4 capability added to the stack.

There is a critical distinction here that the industry frequently conflates: running the AI model at the edge is not the same as governing it there. Many modern physical AI architectures execute inference locally. NVIDIA's Jetson platform, for example, runs models on the device. But a system can execute inference at the edge while its policy enforcement, decision attestation, and operational boundaries still depend on a cloud platform. Edge inference solves latency and data sovereignty. By itself, it does not solve governance under partition. If the policies, boundaries, and attestation depend on connectivity, the governance is cloud-dependent regardless of where the model runs.

The question they didn't ask

In the bottlenecks section, Deloitte asks whether legacy control systems, including PLCs, SCADA, and MES, can integrate with AI orchestration platforms. They frame this as a readiness question: can the legacy infrastructure plug into the new architecture?

But the question that actually matters is the opposite: does the new architecture carry forward the governance principle that made the old systems safe? Not for basic process safety, which PLCs may still handle, but for the new autonomous decisions that only exist at Stage 4.

It does not. And the report leaves this entirely unexplored.

Deloitte states directly that without IIoT connectivity, physical AI cannot operate at scale. Connectivity isn't mentioned as a variable or a risk factor. It is stated as a prerequisite.

Now put a Stage 4 physical AI system into the environments where it creates the most value. On an offshore platform where satellite connectivity is intermittent. In an underground mine where communication infrastructure degrades as operations move deeper. On an agricultural site where the nearest IT support is hours away and where the carrier's SLA allows nearly 9 hours of downtime per year (based on Starlink's published business-class SLA of 99.9% uptime, which excludes outages under 60 seconds, weather events, and physical obstructions). In a disaster response zone where the communications network is the first infrastructure to fail and the last to be restored.

When the link goes down, what governs the autonomous AI decisions above the PLC layer? Where do the policies execute? Where do the decision attestation logs go? How does the system reconcile its autonomous actions with fleet-level policy when connectivity restores?

The report doesn't ask because it treats connectivity as a prerequisite rather than a variable. That single assumption is the foundation the entire framework is built on. And it is precisely the assumption that breaks in the environments where physical AI matters most.

The numbers should alarm us

Deloitte's companion State of AI in the Enterprise 2026 survey provides the scale. Fifty-eight percent of companies are already using physical AI. Adoption is projected to reach 80% within two years. Integration is forecast to grow six-fold.

But only 21% of companies report having a mature governance model for autonomous AI agents. Four out of five organizations are scaling physical AI with governance that is either immature or absent.

Most deployments today are still at Stages 1 through 3, where the PLC safety net holds. This is a temporary state. The architectural regression described here has not yet manifested at scale, but the trajectory is clear and governance architectures take years to design, test, and deploy. The time to solve Stage 4 governance is before Stage 4 scales, not after. Retrofitting governance after deployment is how incidents happen.

Every deployment that reaches Stage 4 without local governance for autonomous AI decisions is accumulating a liability the industry hasn't named yet. More on that soon.

The principle that should carry forward

Deloitte's report is excellent. Their identification of governance as the emerging dimension of physical AI is an important contribution the industry needs to hear.

But the governance lesson of Stage 1 should be the design requirement for Stage 4, not an open question. The engineers who designed PLCs understood something the physical AI industry is now unlearning: authority must reside where consequences occur. That principle wasn't a limitation of older technology. It was the insight that made industrial automation safe enough to trust with physical processes that can injure people and damage equipment.

Physical AI raises the stakes. Non-deterministic AI models can behave unpredictably in ways deterministic PLCs never could. That makes local governance authority more important at Stage 4 than it was at Stage 1, not less. The answer is not to slow physical AI adoption. The answer is to design governance architectures that extend the PLC principle into Stage 4: governance that executes locally at the point of action, operates fully under network partition, and reconciles with fleet-level policy when connectivity restores.

Not governance that replaces PLCs. Governance that covers the gap above them. Not edge inference, which solves where the model runs. Edge governance, which solves where authority lives when the link goes down.

That is what we are building at Federant. The PLC principle carried forward into the age of physical AI. Governance at the point of action. Connected or not.

There is no precedent in industrial operations for accepting off-site governance as the sole authority over safety-critical autonomous systems, and no evidence that the engineers responsible for these environments will start now. Physical AI doesn't change that requirement. It raises the stakes.

Steve Yates, P.E. (VA) is CEO and Co-Founder of Federant, which is developing open governance infrastructure for autonomous edge AI. He previously founded ADI Engineering, a global OEM edge networking supplier, and led its acquisition by Silicom Ltd. He holds 9 US patents in edge networking and cybersecurity with 11 additional patents pending in AI governance infrastructure.

Deloitte released Physical AI: The moment of acceleration on March 18, 2026. It is one of the strongest analyses of physical AI I've read this year. Their data is compelling, their technology framework is thorough, and their conclusion, that governance is the critical emerging dimension of physical AI, is exactly right.

But the report documents a critical issue it never addresses. Its own maturity model, presented as a progression from basic automation to full autonomous physical AI, contains an architectural regression in the most safety-critical dimension of the entire stack: governance.

And the entire framework rests on a dependency it never questions: connectivity.

Stage 1: Governance solved for deterministic control

Deloitte's maturity model maps physical AI adoption across four stages. Stage 1 is automation. The foundational technologies: Programmable Logic Controllers, sensors and actuators, safety systems, and dedicated control logic. At this stage, governance is inherently local. Every safety interlock executes at the point of action. Every operational boundary resides in the same hardware that controls the process. Authority never leaves the cabinet. The system works identically whether the supervisory network is up or not.

To be clear about what "solved" means here: PLCs govern deterministic processes within pre-programmed parameters. They don't handle novel conditions, they don't learn, and they don't adapt. A PLC that encounters a situation outside its programmed logic doesn't improvise. It fails to a predetermined safe state. That's a limited form of governance. But within its scope, it is complete, reliable, and local. The governance problem PLCs were designed to solve, enforcing operational boundaries at the point of action without external dependencies, was solved. Completely.

I would know. I designed these systems.

Thirty-two years ago at GE Fanuc Automation, I engineered CPU and I/O modules for PLCs including the Series 90-30. The non-negotiable bedrock principle: governance lives with the process. If the supervisory layer loses communication, the PLC keeps running within its governed parameters. If a safety interlock trips, it trips locally. No round trip to a server. No dependency on a network link. No cloud service that might be experiencing latency or an outage. Not 99.9% or 99.999%. Governance availability was 100% by design, because the governance and the governed were never separated by a network.

This wasn't a design limitation. It was the design principle. And it worked for decades, across every industrial environment imaginable: refineries, power plants, water treatment facilities, manufacturing lines, mining operations. PLCs governed safely in environments that would challenge most modern edge computing hardware. That's not a legacy constraint. That is hard-won engineering wisdom that has kept industrial automation safe for over half a century.

That is Stage 1 in Deloitte's maturity model.

Stages 2 and 3: Authority migrates quietly

Stage 2 introduces collaborative digitalization: networked sensors, IIoT gateways, edge computing nodes. Data begins flowing upward from the shop floor to supervisory layers. Stage 3 adds digital twins and cloud integration. Operations synchronize bi-directionally with virtual replicas. The platform layer gains increasing operational influence with each stage.

But here is what matters: the PLC governance layer remains intact underneath. The cloud and platform layers at Stages 2 and 3 add data visibility, digital twins, and supervisory optimization on top of a local governance foundation that continues to function independently. When the supervisory link fails, the PLC catches it. The local safety interlocks still fire. The operational boundaries still hold. The system degrades gracefully because the original governance architecture is still in place as a safety net.

This explains why the migration of authority toward the cloud has been invisible so far. Nothing has gone wrong at Stages 2 and 3. Not because cloud-dependent governance is safe, but because local governance has been quietly covering for it.

Stage 4: A new class of ungoverned decisions

By Stage 4, Deloitte envisions full physical AI: autonomous decision-making with no human involvement, fleet orchestration platforms, vision-language-action models trained in simulation and deployed at the edge. This is the frontier.

And governance? At Stage 4, Deloitte calls it an "emerging dimension." A new class of risks involving physical safety, system autonomy, accountability, and operational control, "often arising at machine speed and beyond direct human intervention." They acknowledge that the current approach is restrictive, focused on defining where AI should not be used, with the harder question of how to safely grant autonomy still ahead.

Governance was solved for deterministic control at Stage 1 and reverts to unsolved at Stage 4. Physical AI regresses industrial governance from solved to unsolved, and calls that progress.

The regression is not that PLCs disappear at Stage 4. They may well still be there, handling basic process safety interlocks underneath. At Stages 2 and 3, losing connectivity means losing optimization but keeping safety. That's graceful degradation and it has worked well. The concern at Stage 4 is different: autonomous AI decisions increasingly have direct physical consequences beyond optimization and beyond what PLC interlocks were designed to catch.

The regression is that Stage 4 introduces an entire class of autonomous decisions that PLCs were never designed to govern. A PLC can enforce "shut down the pump if pressure exceeds 150 PSI." A PLC cannot govern an AI vision system that reclassifies an obstacle and chooses an alternate route. It cannot govern a fleet orchestration model that reassigns task priorities across autonomous vehicles. These are non-deterministic decisions made by AI models operating above the PLC layer, and the governance of those decisions (the policies, the boundaries, the attestation, the explainability) lives in the platform layer. On the other side of a network link.

The PLC safety net still catches catastrophic process failures. But it cannot govern AI operational behavior. The gap between what local safety systems can enforce and what autonomous AI actually decides is where governance vanishes under partition. And that gap grows with every Stage 4 capability added to the stack.

There is a critical distinction here that the industry frequently conflates: running the AI model at the edge is not the same as governing it there. Many modern physical AI architectures execute inference locally. NVIDIA's Jetson platform, for example, runs models on the device. But a system can execute inference at the edge while its policy enforcement, decision attestation, and operational boundaries still depend on a cloud platform. Edge inference solves latency and data sovereignty. By itself, it does not solve governance under partition. If the policies, boundaries, and attestation depend on connectivity, the governance is cloud-dependent regardless of where the model runs.
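To make the distinction concrete, here is a minimal sketch, not any vendor's actual architecture. It assumes a hypothetical `CloudPolicyClient` standing in for a platform-side policy service, a toy `infer_locally` function standing in for on-device inference, and an invented speed limit. The point it illustrates is narrow: inference keeps producing decisions when the link drops, but the authorization step does not.

```python
from dataclasses import dataclass


class LinkDown(Exception):
    """Raised when the (hypothetical) cloud policy service is unreachable."""


@dataclass
class CloudPolicyClient:
    # Hypothetical stand-in for a platform-side policy service.
    link_up: bool = True
    max_speed_mps: float = 2.0  # illustrative boundary, not a real limit

    def authorize(self, proposed_speed_mps: float) -> bool:
        if not self.link_up:
            raise LinkDown("policy service unreachable")
        return proposed_speed_mps <= self.max_speed_mps


def infer_locally(obstacle_distance_m: float) -> float:
    """Toy stand-in for edge inference: proposes a speed from sensor input."""
    return min(3.0, obstacle_distance_m / 2.0)


def act(client: CloudPolicyClient, obstacle_distance_m: float):
    # Inference runs on the device and works with or without the link.
    proposed = infer_locally(obstacle_distance_m)
    try:
        allowed = client.authorize(proposed)
    except LinkDown:
        # The model still produced a decision, but nothing governed it.
        return ("ungoverned", proposed)
    return ("approved" if allowed else "denied", proposed)


print(act(CloudPolicyClient(link_up=True), 10.0))   # policy check runs
print(act(CloudPolicyClient(link_up=False), 10.0))  # policy check cannot run
```

In this sketch the designer is left with two options under partition: halt the machine, or let it act without a policy check. Neither is governance.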

The question they didn't ask

In the bottlenecks section, Deloitte asks whether legacy control systems, including PLCs, SCADA, and MES, can integrate with AI orchestration platforms. They frame this as a readiness question: can the legacy infrastructure plug into the new architecture?

But the question that actually matters is the opposite: does the new architecture carry forward the governance principle that made the old systems safe? Not for basic process safety, which PLCs may still handle, but for the new autonomous decisions that only exist at Stage 4.

It does not. And the report leaves this entirely unexplored.

Deloitte states directly that without IIoT connectivity, physical AI cannot operate at scale. Connectivity isn't mentioned as a variable or a risk factor. It is stated as a prerequisite.

Now put a Stage 4 physical AI system into the environments where it creates the most value. On an offshore platform where satellite connectivity is intermittent. In an underground mine where communication infrastructure degrades as operations move deeper. On an agricultural site where the nearest IT support is hours away and where the carrier's SLA allows nearly 9 hours of downtime per year (0.1% of 8,760 hours, based on Starlink's published business-class SLA of 99.9% uptime; that SLA also excludes outages under 60 seconds, weather events, and physical obstructions, so real-world downtime can be higher). In a disaster response zone where the communications network is the first infrastructure to fail and the last to be restored.
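The downtime figure is simple arithmetic, sketched below. The only inputs are the 99.9% uptime figure cited above and a 365-day year; the function name is illustrative.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours in a non-leap year


def allowed_downtime_hours(uptime_fraction: float) -> float:
    """Hours per year a link may be down while still meeting the SLA."""
    return (1.0 - uptime_fraction) * HOURS_PER_YEAR


print(round(allowed_downtime_hours(0.999), 2))  # 8.76
```

Because excluded events do not count against the SLA, this number is a floor on what the operator should plan for, not a ceiling.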

When the link goes down, what governs the autonomous AI decisions above the PLC layer? Where do the policies execute? Where do the decision attestation logs go? How does the system reconcile its autonomous actions with fleet-level policy when connectivity restores?

The report doesn't ask because it treats connectivity as a prerequisite rather than a variable. That single assumption is the foundation the entire framework is built on. And it is precisely the assumption that breaks in the environments where physical AI matters most.

The numbers should alarm us

Deloitte's companion State of AI in the Enterprise 2026 survey provides the scale. Fifty-eight percent of companies are already using physical AI. Adoption is projected to reach 80% within two years. Integration is forecast to grow six-fold.

But only 21% of companies report having a mature governance model for autonomous AI agents. Roughly four out of five organizations are scaling physical AI with governance that is either immature or absent.

Most deployments today are still at Stages 1 through 3, where the PLC safety net holds. This is a temporary state. The architectural regression described here has not yet manifested at scale, but the trajectory is clear, and governance architectures take years to design, test, and deploy. The time to solve Stage 4 governance is before Stage 4 scales, not after. Retrofitting governance after deployment is how incidents happen.

Every deployment that reaches Stage 4 without local governance for autonomous AI decisions is accumulating a liability the industry hasn't named yet. More on that soon.

The principle that should carry forward

Deloitte's report is excellent. Their identification of governance as the emerging dimension of physical AI is an important contribution the industry needs to hear.

But the governance lesson of Stage 1 should be the design requirement for Stage 4, not an open question. The engineers who designed PLCs understood something the physical AI industry is now unlearning: authority must reside where consequences occur. That principle wasn't a limitation of older technology. It was the insight that made industrial automation safe enough to trust with physical processes that can injure people and damage equipment.

Physical AI raises the stakes. Non-deterministic AI models can behave unpredictably in ways deterministic PLCs never could. That makes local governance authority more important at Stage 4 than it was at Stage 1, not less. The answer is not to slow physical AI adoption. The answer is to design governance architectures that extend the PLC principle into Stage 4: governance that executes locally at the point of action, operates fully under network partition, and reconciles with fleet-level policy when connectivity restores.
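Those three properties can be sketched in a few lines. This is an illustration under stated assumptions, not a description of any shipping product: `LocalGovernor`, its speed boundary, the attestation record fields, and the `upload` callback are all hypothetical names invented for the example.

```python
import json
import time
from dataclasses import dataclass, field


@dataclass
class LocalGovernor:
    # Policy lives on the device; names and limits here are hypothetical.
    policy_version: int = 1
    max_speed_mps: float = 2.0
    pending: list = field(default_factory=list)  # attestations awaiting upload

    def decide(self, proposed_speed_mps: float) -> float:
        """Enforce the boundary locally. This path never touches the network."""
        granted = min(proposed_speed_mps, self.max_speed_mps)
        self.pending.append({
            "ts": time.time(),
            "policy_version": self.policy_version,
            "proposed": proposed_speed_mps,
            "granted": granted,
        })
        return granted

    def reconcile(self, upload, fleet_policy: dict) -> int:
        """On reconnect: ship the attestation log, then adopt newer policy."""
        sent = 0
        while self.pending:
            upload(json.dumps(self.pending[0]))  # may raise if the link drops
            self.pending.pop(0)                  # drop only after a clean send
            sent += 1
        if fleet_policy["version"] > self.policy_version:
            self.policy_version = fleet_policy["version"]
            self.max_speed_mps = fleet_policy["max_speed_mps"]
        return sent


gov = LocalGovernor()
print(gov.decide(3.0))  # clamped to the local boundary while partitioned
received = []
print(gov.reconcile(received.append, {"version": 2, "max_speed_mps": 1.5}))
```

The design choice worth noting is the direction of dependency: the decision path depends only on local state, and the network is used for reporting and policy updates after the fact.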

Not governance that replaces PLCs. Governance that covers the gap above them. Not edge inference, which solves where the model runs. Edge governance, which solves where authority lives when the link goes down.

That is what we are building at Federant. The PLC principle carried forward into the age of physical AI. Governance at the point of action. Connected or not.

There is no precedent in industrial operations for accepting off-site governance as the sole authority over safety-critical autonomous systems, and no evidence that the engineers responsible for these environments will start accepting it now. Physical AI doesn't change that requirement. It raises the stakes.

Steve Yates, P.E. (VA) is CEO and Co-Founder of Federant, which is developing open governance infrastructure for autonomous edge AI. He previously founded ADI Engineering, a global OEM edge networking supplier, and led its acquisition by Silicom Ltd. He holds 9 US patents in edge networking and cybersecurity with 11 additional patents pending in AI governance infrastructure.

© 2026 Federant
